Win-Loss Playbook

What 500,000 Deals Taught Us About Win-Loss Programs

A playbook for GTM teams who want coverage, accuracy, and insights that actually flow. Illustrated with examples.


Why Most Win-Loss Programs Fail

Win-loss sounds simple. Find out why you lost. Track it. Fix it.

The reality is most programs never get close to that.

1. The accuracy problem

The data you do collect is compromised before it reaches you.

[Chart: CRM data quality, share of closed deals (n=500k)]

Reps log loss reasons after deals they would rather forget. "Pricing" is the most common entry in most CRMs. It is also the least accurate. Roughly 33% of CRM loss reasons show a significant mismatch with what buyers actually said.

Not because reps are lying, but because most loss reasons and decision drivers are never explicitly discussed with your sales team. The fact that pricing was the last topic your reps discussed doesn't mean that's where the deal was lost.

Call transcripts have a similar problem. They capture everything said to your reps, and in some cases can help us tease out the true loss reason. But frequency is not causality. Knowing that "integration" came up in 60% of lost deals does not tell you whether integration drove the decision or was a passing mention.

2. The coverage problem

Traditional win-loss programs sample. A vendor runs interviews on 10 deals a quarter. An in-house analyst covers what they can. The result: decisions made on anecdotes, not patterns.

Fewer than 5% of closed deals get analyzed in a typical enterprise win-loss program. That means more than 95% of what your market is telling you goes unheard.

3. The speed problem

By the time a traditional win-loss program delivers findings, the deals are ancient history. Reps have moved on. The competitive landscape has shifted. The insight arrives too late to change anything.

86 days is the average time from deal close to insight delivery in a traditional win-loss program.

4. The distribution problem

Even programs that work well on the research side tend to fail on delivery. Findings live in a slide deck or a quarterly report. Sales never sees them. Product gets a summary. Competitive intel updates battlecards manually, months after the data was collected.

A win-loss program that does not flow into rep behavior, product decisions, and positioning work is an expensive research exercise.

5. The budget problem

Getting a win-loss program approved is its own obstacle.

The pitch is hard. You are asking for budget to interview buyers who already left. The output is a report. The timeline is months. And the last program someone tried did not change much.

Traditional win-loss vendors charge $25,000 to $100,000 per project. For that you get a point-in-time snapshot, a slide deck, and a follow-up call. It is easy to see why finance says no.

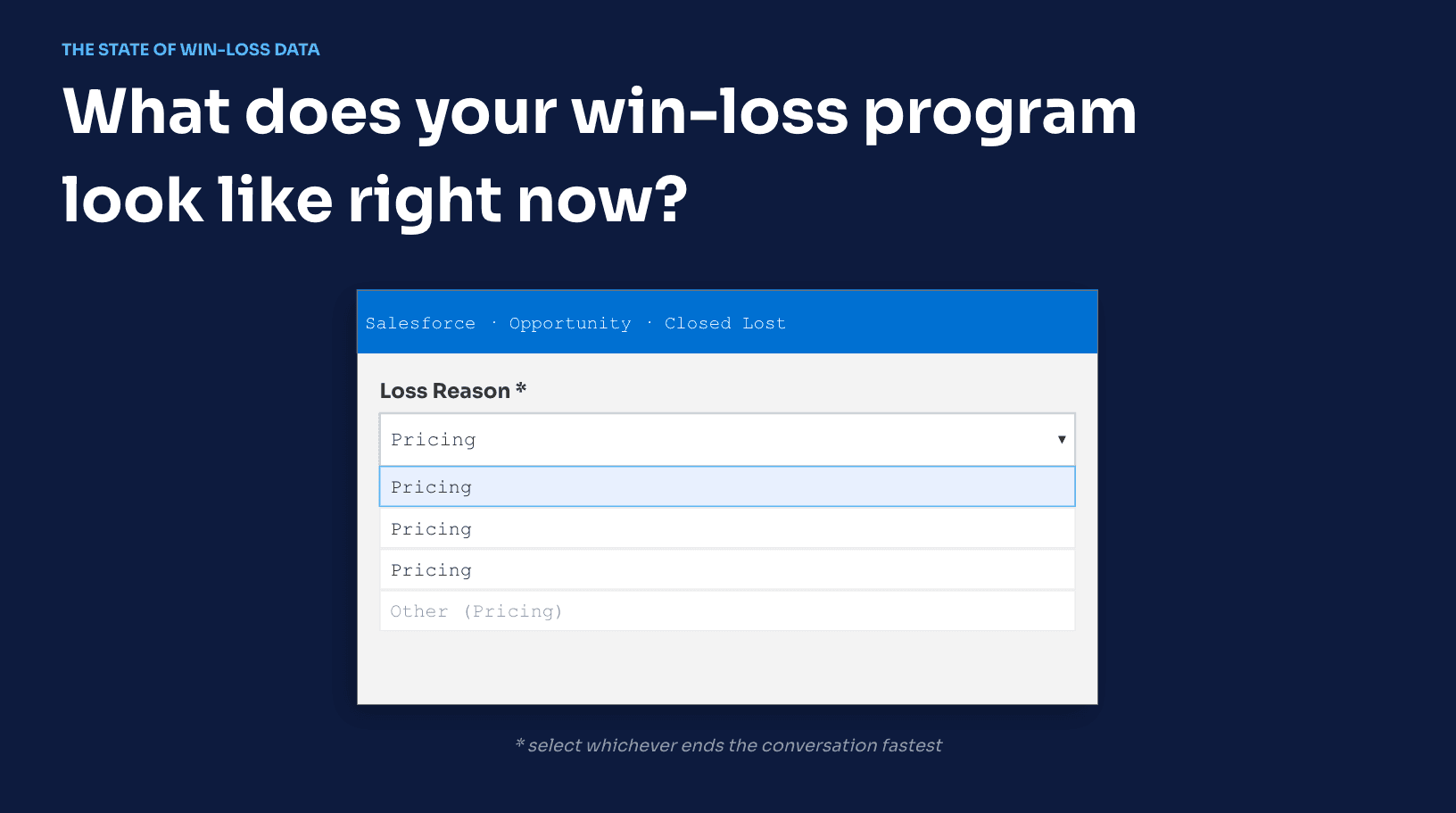

So most teams either skip win-loss entirely or build something lightweight in-house. A CRM dropdown menu and a chart in a slide deck. One Product Marketer doing their best with Gong and the occasional survey. Coverage stays low. Confidence stays low. The program quietly dies.

Why it matters

The win-loss challenges of yesterday no longer apply. With AI, we can solve the logistical challenge and deliver on the promise of win-loss analysis that so many teams have missed out on.

The teams that get win-loss right do not treat it as a research project. They treat it as a revenue input. The program does not justify itself with a quarterly report. It justifies itself when a rep wins a deal because they knew exactly how to handle the integration objection. When product prioritizes the right roadmap item. When a battlecard reflects what buyers actually said last month, not last year.

For those of you considering building a win-loss program, run a quick calculation:

- How much revenue is sitting in our pipeline today?

- How many of these deals could we win with better insights?

Note that teams that operationalize win-loss analysis properly win anywhere from 5%-15% more of their "winnable deals".

A 1-point improvement in win rate on $10M in pipeline is $100,000 in revenue. A 5-point improvement is $500,000. The deals you are losing right now are telling you exactly what to fix. You are just not collecting it in a way that lets you act on it.
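The arithmetic is simple enough to sketch. This is a minimal illustration, assuming the improvement is measured in percentage points of win rate and applies evenly across the whole pipeline. The function name `added_revenue` is ours, not part of any tool.

```python
def added_revenue(pipeline_usd: float, win_rate_gain_points: float) -> float:
    """Revenue added when win rate rises by the given number of percentage points.

    Assumes every dollar of pipeline is equally winnable, which is a simplification.
    """
    return pipeline_usd * win_rate_gain_points / 100


# The 5 to 15 point range quoted above, plus the 1-point baseline.
for points in (1, 5, 15):
    print(f"+{points} pts on $10M pipeline: ${added_revenue(10_000_000, points):,.0f}")
```

Swap in your own pipeline figure and a gain you think is realistic to get a rough number for the budget conversation.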

That is the version worth building. The rest of this playbook shows you how.

“We used to rely on CRM notes, which are not reliable. It wasn't a full picture of what we were trying to learn from our lost and won deals.”

What Fields to Track

Most win-loss programs fail early because of structural limitations in their data model.

A few small, structural fixes to the data you're collecting for each deal can make a huge difference.

The Problem

Most teams capture what is easy (to get reps to fill out), not what is useful.

You might think of CRM-tracked win-loss data as the "Loss Reasons" dropdown menu in Salesforce, or a "Loss Reasons Notes" field.

It's important to note that win-loss data is broader than just that loss reason. It's who you competed against, what product capabilities influenced the decision, how pricing fit, how sales executed, and a variety of other factors that impacted the buyer.

The result of most basic win-loss programs is a limited, inaccurate picture that can tell you what happened but not why, and only to a limited audience. Or worse, one that can tell you why but only in prose you cannot aggregate.

A useful deal record has two things running in parallel for every field that matters: a structured value for aggregation and a text field for the nuance behind it. Strip either one and you lose half the value.

Here is how to build it.

The Data Model

Decision Drivers

This is the most important part of your data model. It is also the most commonly botched.

Most deals are decided by three to four factors. Each one needs three things: a label, a weight, and a description.

Label: A specific, named driver from a shared taxonomy. Not "pricing." Not "product." Something precise enough to aggregate across deals and still mean the same thing to two different analysts.

Good examples:

- Usage-based pricing uncertainty

- Pricing complexity

- Missing SSO

- HRIS connector gap

- Champion lost internal support

- Implementation timeline concern

Broad categories like "Pricing" or "Product" are useful for filtering. The specific driver is what produces insight. "We lost 14 deals to pricing concerns last quarter" tells you nothing. "11 of those 14 cited usage-based pricing uncertainty specifically" tells you what to fix.

Hindsight maintains a driver library organized by category and common industry patterns. Use it as a starting point and extend it for your business. The goal is a taxonomy that is consistent enough to aggregate but specific enough to act on.

Weight: Each driver gets a percentage influence on the decision. Drivers across a deal should sum to 100%.

This changes how you read the data entirely. A deal where pricing was tagged at 8% influence is a very different deal from one where pricing was tagged at 52%, even if both have "pricing" as a listed driver. Aggregate the weights and you get a map of what is actually driving decisions in your market, not just what came up.

Description: A short text field. What specifically happened with this driver in this deal? What did the buyer say? What did the rep observe? This is the field that explains an outlier, captures a quote, and gives future readers the context the structured fields cannot.
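The label, weight, and description structure can be sketched as a small data model. This is an illustration only, not Hindsight's schema: the class name, the field names, the category values, and the choice to count a missing driver as 0% when averaging are all assumptions.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionDriver:
    label: str             # specific driver from the shared taxonomy
    category: str          # broad bucket, useful only for filtering
    weight: int            # percentage influence on this deal's decision
    description: str = ""  # what the buyer said or the rep observed


def validate_weights(drivers: list[DecisionDriver]) -> None:
    """Raise ValueError unless the deal's driver weights sum to 100."""
    total = sum(d.weight for d in drivers)
    if total != 100:
        raise ValueError(f"driver weights sum to {total}, expected 100")


def mean_weight_by_label(deals: list[list[DecisionDriver]]) -> dict[str, float]:
    """Average influence per driver across deals; a deal without the driver counts as 0."""
    totals: dict[str, int] = defaultdict(int)
    for drivers in deals:
        validate_weights(drivers)
        for driver in drivers:
            totals[driver.label] += driver.weight
    return {label: total / len(deals) for label, total in totals.items()}


# Two hypothetical deals. Both list the pricing driver, at very different weights.
deal_a = [
    DecisionDriver("Usage-based pricing uncertainty", "Pricing", 52),
    DecisionDriver("Missing SSO", "Product", 30),
    DecisionDriver("Implementation timeline concern", "Process", 18),
]
deal_b = [
    DecisionDriver("Missing SSO", "Product", 60),
    DecisionDriver("Champion lost internal support", "Customer", 32),
    DecisionDriver("Usage-based pricing uncertainty", "Pricing", 8),
]
influence = mean_weight_by_label([deal_a, deal_b])
```

Counting mentions would rank the pricing driver and Missing SSO equally here. Averaging the weights does not.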

Competitors

Track every vendor in the evaluation, not just the one that won.

Each competitor entry needs:

Competitor name

Type:

- Incumbent (already in the account)

- Primary competitor (main alternative evaluated)

- Other (mentioned, briefly evaluated, or used for price benchmarking)

Outcome:

- Selected (they won)

- Evaluated (seriously considered, not selected)

- Mentioned (came up but not formally evaluated)

Comparison notes: A text field. How did the buyer describe this vendor relative to you? What did they do better? What did they do worse? What specific capabilities or terms came up in comparison?

This last field is the one that actually updates battlecards. "Lost to Competitor A" is a data point. "Buyer said Competitor A had a native QuickBooks connector and offered a 90-day implementation guarantee. We had neither" is something a rep can use tomorrow.
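A competitor entry maps naturally onto two small enumerations and a notes field. The sketch below is one possible shape. The class and function names are hypothetical, and the rule that at most one vendor can be marked Selected is our assumption.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class CompetitorType(Enum):
    INCUMBENT = "incumbent"          # already in the account
    PRIMARY = "primary competitor"   # main alternative evaluated
    OTHER = "other"                  # mentioned, briefly evaluated, or price benchmark


class Outcome(Enum):
    SELECTED = "selected"
    EVALUATED = "evaluated"
    MENTIONED = "mentioned"


@dataclass(frozen=True)
class CompetitorEntry:
    name: str
    type: CompetitorType
    outcome: Outcome
    comparison_notes: str = ""  # how the buyer described this vendor relative to you


def selected_vendor(entries: list[CompetitorEntry]) -> Optional[CompetitorEntry]:
    """Return the vendor marked Selected, or None if no competitor won."""
    winners = [e for e in entries if e.outcome is Outcome.SELECTED]
    if len(winners) > 1:
        raise ValueError("more than one competitor marked as selected")
    return winners[0] if winners else None


entries = [
    CompetitorEntry(
        "Competitor A",
        CompetitorType.PRIMARY,
        Outcome.SELECTED,
        "Native QuickBooks connector and a 90-day implementation guarantee.",
    ),
    CompetitorEntry("Competitor B", CompetitorType.OTHER, Outcome.MENTIONED),
]
```

Keeping type and outcome as fixed values is what lets you filter across deals. The notes field carries what goes into the battlecard.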

Product

This is the layer that makes your win-loss data useful to product teams, not just GTM.

For every capability that played a role in a deal, capture:

Feature name: Pull from your product taxonomy. Use the same terms your product team uses internally. Consistency matters for aggregation across deals.

Role in deal:

- Gap (buyer needed it, you do not have it)

- Blocker (deal-breaker, explicitly cited)

- Request (buyer asked for it, not a hard requirement)

- Delight (positively influenced the decision)

- Concern (buyer had questions or hesitation around it)

Notes: What specifically did the buyer say? How did it come up? Was it raised by the buyer or surfaced by the rep?

Aggregated across deals, this gives product a ranked, evidence-backed view of what is winning and losing deals. Not a feature request list. Not a support ticket queue. Actual deal outcomes tied to specific capabilities.
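One way to produce that ranked view is to tally roles per feature. This is a rough sketch under our own assumptions: it treats only Gap and Blocker as negative signal, and the function names and sample data are hypothetical.

```python
from collections import Counter
from enum import Enum


class Role(Enum):
    GAP = "gap"
    BLOCKER = "blocker"
    REQUEST = "request"
    DELIGHT = "delight"
    CONCERN = "concern"


# (feature name, role in deal), one tuple per capability per deal
FeatureMention = tuple[str, Role]


def role_breakdown(mentions: list[FeatureMention]) -> dict[str, Counter]:
    """Count how often each feature played each role across deals."""
    breakdown: dict[str, Counter] = {}
    for feature, role in mentions:
        breakdown.setdefault(feature, Counter())[role] += 1
    return breakdown


def rank_deal_risks(mentions: list[FeatureMention]) -> list[tuple[str, int]]:
    """Rank features by how many deals cited them as a Gap or Blocker."""
    risky = Counter(f for f, role in mentions if role in (Role.GAP, Role.BLOCKER))
    return risky.most_common()


mentions = [
    ("QuickBooks Integration", Role.GAP),
    ("QuickBooks Integration", Role.BLOCKER),
    ("QuickBooks Integration", Role.GAP),
    ("SSO", Role.GAP),
    ("Service Workflow Automation", Role.DELIGHT),
]
ranking = rank_deal_risks(mentions)
```

The same tally filtered to Delight gives product the other half of the picture: what is winning deals.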

Scorecard

After a full deal review, score the deal across five dimensions. These are not entered by reps. They are completed by the analyst or Hindsight after the full record has been reviewed.

- Product Fit (1-5)

- Pricing Fit (1-5)

- Customer Fit (1-5)

- Sales Execution (1-5)

- Competitive Performance (1-5)

Each score gets a short rationale note. Why did this deal score a 2 on sales execution? What specifically happened?

Scores enable KPI tracking over time. Average product fit across lost deals. Sales execution score by rep or segment. Competitive performance against a specific competitor. These are the numbers a VP wants in a quarterly review. They only mean something if the underlying record is complete.
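Here is a sketch of how the scorecard could roll up into those KPIs. The dimension keys, the `Scorecard` class, and the decision to reject incomplete scorecards outright are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from statistics import mean

DIMENSIONS = (
    "product_fit",
    "pricing_fit",
    "customer_fit",
    "sales_execution",
    "competitive_performance",
)


@dataclass
class Scorecard:
    deal_id: str
    rep: str
    scores: dict[str, int]                                   # dimension -> 1..5
    rationale: dict[str, str] = field(default_factory=dict)  # dimension -> short note

    def __post_init__(self) -> None:
        # An incomplete scorecard would distort the averages, so reject it early.
        for dimension in DIMENSIONS:
            score = self.scores.get(dimension)
            if score is None or not 1 <= score <= 5:
                raise ValueError(f"{self.deal_id}: {dimension} must be scored 1-5")


def average_by_rep(cards: list[Scorecard], dimension: str) -> dict[str, float]:
    """Average one scorecard dimension per rep."""
    by_rep: dict[str, list[int]] = {}
    for card in cards:
        by_rep.setdefault(card.rep, []).append(card.scores[dimension])
    return {rep: mean(values) for rep, values in by_rep.items()}


baseline = dict.fromkeys(DIMENSIONS, 3)
cards = [
    Scorecard("D-1", "rep_a", {**baseline, "sales_execution": 2}),
    Scorecard("D-2", "rep_a", {**baseline, "sales_execution": 4}),
    Scorecard("D-3", "rep_b", {**baseline, "sales_execution": 5}),
]
execution = average_by_rep(cards, "sales_execution")
```

Grouping by segment or competitor instead of rep is the same function with a different key.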

Putting it together

Here is what a complete deal record looks like in practice:

Exec Summary

Meridian Health selected Frontline by Ormandy over Hindsight despite an active evaluation. The $240K ARR deal was lost primarily due to a perceived integration gap with their existing QuickBooks Online setup and concerns about migration risk. The rep marked loss reason as 'pricing' in Salesforce — buyer interviews revealed integration confidence was the real driver.

Decision Drivers

Buyer cited the absence of a native QuickBooks connector as the deciding factor. Three separate calls referenced it unprompted.

Champion flagged the complexity of migrating existing job history. No migration playbook was presented during evaluation.

Ormandy had a two-year relationship with the IT lead. Switching cost was perceived as high even before the demo.

Pricing was mentioned once in email. CRM recorded it as the primary loss reason, but interviews do not support that conclusion.

Timeline pressure and procurement cycle length contributed marginally.

Scorecard

Competitors

Frontline by Ormandy (Winner): Selected. Had incumbent advantage and a native QuickBooks integration. Positioning focused on low-friction migration.

Manual processes (Evaluated): Status quo was considered briefly. Dismissed early. Not a real competitive threat in this deal.

Features

QuickBooks Integration: Gap (3). Buyer required a native connector. Hindsight does not have one. This was the deal's turning point.

Service Workflow Automation: Love (2). Buyer was impressed by the automation demo. Not enough to overcome the integration concern.

Job & Project Management: Request (2). Buyer asked whether job-level deal tracking was on the roadmap. Rep did not have an answer.

Answers

Decision Maker

Persona: IT Decision Maker

Primary Needs: Native integrations with existing finance and operations stack. Low-friction onboarding with minimal disruption to active service workflows. Confidence in vendor support during migration.

A complete deal record is not a form. It is a structured document with layered depth.

The structured fields handle aggregation. The text fields handle context. The two together handle the question every stakeholder actually asks: what is happening, and why.

For teams using AI to synthesize win-loss data, this structure matters even more. An AI agent reading a flat CRM export gets noise. An AI agent reading a properly structured deal record with labeled drivers, weighted influences, competitor comparisons, and product gaps gets something it can reason over accurately. We will cover how to format records for AI in Section 09.

For now, small tweaks to your CRM setup can have a massive impact on how useful your win-loss data is at the end of each quarter.

Analyzing Deal Data

Most teams don't have the bandwidth to review their deals.

True root cause analysis requires a level of nuance and detail that simple CRM fields and AI summaries can't provide.

Here's a breakdown of our approach (which we've automated with AI whenever possible).

Start with the timeline

Every deal leaves a trail. Emails. Call recordings. CRM notes. Slack messages. Yours may also include contract redlines and support tickets.

Before you analyze anything, assemble the timeline chronologically.

This matters because deals shift. What the buyer said in discovery is often different from what they said in procurement. A concern that surfaced in week two and never came up again is different from one that reappeared in the final call.
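Assembling the timeline is mostly a merge and a sort. The sketch below assumes each source has already been exported into a common shape. The `Touchpoint` class, the source names, and the dates are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Touchpoint:
    occurred_at: datetime
    source: str  # e.g. "email", "call", "crm", "slack"
    text: str


def build_timeline(*batches: list[Touchpoint]) -> list[Touchpoint]:
    """Merge touchpoints from every source into one chronological list."""
    merged = [tp for batch in batches for tp in batch]
    return sorted(merged, key=lambda tp: tp.occurred_at)


def mention_dates(timeline: list[Touchpoint], keyword: str) -> list[datetime]:
    """When a topic came up, so a one-off mention can be told apart from a recurring one."""
    needle = keyword.lower()
    return [tp.occurred_at for tp in timeline if needle in tp.text.lower()]


emails = [Touchpoint(datetime(2024, 3, 12), "email", "They have a native QuickBooks connector.")]
calls = [
    Touchpoint(datetime(2024, 3, 5), "call", "Discovery call. Timeline questions only."),
    Touchpoint(datetime(2024, 4, 2), "call", "Final call. QuickBooks came up again."),
]
timeline = build_timeline(emails, calls)
quickbooks_dates = mention_dates(timeline, "quickbooks")
```

A topic that shows up in week two and again in the final call reads very differently from one that appears once and disappears.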

Analyze each data point individually (first-pass summaries)

Before you look at the deal as a whole, look at each source on its own terms.

What does this email say? What does this call reveal about where the buyer's head was at this point in the process? What did the rep note here, and does it match what was actually said?

This step is slow but it's also the step that catches everything important.

When analyzing deals with multiple touchpoints, aggregating everything at once inside one prompt / context window leads AI models to overlook key facts.

Some facts only appear if someone (or an AI) reads the source individually to extract takeaways. We've done tons of testing on this, but we encourage you to test this yourself!

The goal at this stage is not to draw conclusions. It is to extract what each source actually says, flag anything that looks inconsistent, and note what is missing.
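The shape of a first pass can be sketched in a few lines. The keyword matcher below is only a stand-in for whatever model or analyst does the real extraction, and the topic list is hypothetical. The structure is what matters: one call per source, and an explicit record of what each source does not cover.

```python
from typing import Callable

TOPICS = ("pricing", "integration", "timeline")  # hypothetical topic list

Extraction = dict[str, list[str]]


def keyword_extract(text: str) -> Extraction:
    """Stand-in for a model call: report which topics a source does and does not mention."""
    lowered = text.lower()
    return {
        "mentioned": [t for t in TOPICS if t in lowered],
        "not_mentioned": [t for t in TOPICS if t not in lowered],
    }


def first_pass(
    sources: dict[str, str],
    extract: Callable[[str], Extraction] = keyword_extract,
) -> dict[str, Extraction]:
    """Summarize each source on its own; never concatenate them into one input."""
    return {name: extract(text) for name, text in sources.items()}


sources = {
    "email": "We're also evaluating another vendor with a native integration.",
    "call": "Buyer raised the implementation timeline three times.",
    "crm": "Loss reason: Pricing",
}
summaries = first_pass(sources)
```

Here the CRM is the only source that mentions pricing. That is easy to see when each source is summarized separately and easy to miss when everything is read at once.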

Gaps are as important as data

This is where most AI workflows break down. If you aren't explicitly asking the AI to flag what it doesn't know, it will hallucinate confidently.

Buyers do not put everything in writing or say everything on a call. Decisions get made in conversations that were never recorded. A champion's internal advocacy, or lack of it, happens entirely outside your visibility. The moment a competitor's sales rep said the right thing to the right person at the right time does not appear anywhere in your CRM.

These gaps are not just missing data. They are the black box at the center of most lost deals.

Win-loss interviews exist to open that black box. Not because buyers will tell you everything directly. They often will not. But a buyer interview captures what no system records: hesitation in how something is answered, the topics a buyer avoids, the way they describe a competitor that signals something beyond the words. A good interviewer, human or AI, picks up signals that transcripts and CRM data simply cannot.

The rule is straightforward. If your structured sources leave a gap in the deal story, an interview is the only way to fill it with anything verified. Everything else is inference.

Synthesize last (Summary of Summaries)

Once you have reviewed each source individually and identified where the gaps are, synthesize.

Source excerpts:

- "We're also evaluating Ormandy; they have a native QB connector…"

- Buyer mentions implementation timeline 3×. Integration not raised.

- Rep logged: "Pricing", entered 4 days after deal close.

- "The QuickBooks gap was honestly the deciding factor for our IT lead."

First-pass summaries:

- Competitor advantage flagged early. Integration gap known to buyer by week 2.

- Timeline anxiety present throughout. Integration not surfaced by rep.

- "Pricing" as primary driver. No corroborating evidence in other sources.

- Integration confidence was the decisive factor. Pricing mentioned once, peripherally.

Synthesis: Lost on integration confidence, not pricing.

Buyer identified the QuickBooks gap as the deciding factor, corroborated by unprompted email references in week 2. CRM loss reason ('Pricing') is not supported by any other source. Rep did not surface the integration concern on the discovery call despite it appearing in email before that call.

What is the most accurate account of what happened in this deal? Where do sources agree? Where do they conflict? Which conflicts can be resolved by a higher-trust source like a buyer interview, and which remain genuinely ambiguous?

The synthesis is the deal record. It is not a transcript dump. It is not a CRM export. It is a structured, sourced account of what actually happened, built from everything you could access and honest about what you could not.

This distinction matters for every team that reads the record later. A product manager acting on a feature gap wants to know if that gap came from a buyer interview or a rep's interpretation of a Gong call. They are not the same thing.
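A synthesis step that keeps attribution and refuses to hide conflicts might look like the sketch below. The trust ranking is an assumption: the text above only establishes that a buyer interview is a higher-trust source, and the other values are placeholders you would tune. The `Claim` class and field names are also hypothetical.

```python
from dataclasses import dataclass

# Hypothetical trust ranking. Only "buyer interview ranks highest" comes from the playbook.
TRUST = {"buyer_interview": 3, "call": 2, "email": 2, "rep_interview": 2, "crm": 1}


@dataclass(frozen=True)
class Claim:
    field: str   # e.g. "primary_driver"
    value: str
    source: str


def synthesize(claims: list[Claim]) -> dict[str, dict]:
    """Build a record per field that keeps every source and flags disagreements."""
    grouped: dict[str, list[Claim]] = {}
    for claim in claims:
        grouped.setdefault(claim.field, []).append(claim)

    record = {}
    for field_name, group in grouped.items():
        top = max(TRUST.get(c.source, 0) for c in group)
        leading = {c.value for c in group if TRUST.get(c.source, 0) == top}
        record[field_name] = {
            # None marks a conflict that stays ambiguous instead of being resolved silently.
            "value": next(iter(leading)) if len(leading) == 1 else None,
            "conflict": len({c.value for c in group}) > 1,
            "evidence": [(c.source, c.value) for c in group],
        }
    return record


claims = [
    Claim("primary_driver", "pricing", "crm"),
    Claim("primary_driver", "integration confidence", "email"),
    Claim("primary_driver", "integration confidence", "buyer_interview"),
]
deal_record = synthesize(claims)
```

The `evidence` list is what lets a product manager see whether a gap came from the buyer or from a rep's interpretation.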

The bandwidth problem

Here is the honest constraint. Doing this well takes time. Reviewing individual sources, flagging conflicts, identifying gaps, running interviews, synthesizing a complete record. For one deal, that might be two to three hours of analyst work.

Most teams close dozens of deals a month. The math does not work.

The answer is not to do less analysis. It is to build a system that does it continuously and automatically, at the deal level, so nothing ages before it gets reviewed.

Deals analyzed within 48 hours of close produce dramatically more accurate records than deals analyzed weeks later. Memory fades. Buyers go cold. Context disappears. The window for getting this right is short.

Any program worth running has to solve the bandwidth problem first. That means either dedicated analyst capacity, which most teams do not have, or automation that does the heavy lifting and flags what needs human attention.

A note on AI in deal analysis

AI can read every email, call, and CRM record in a deal faster than any analyst. That capability is real and valuable.

What AI does badly, without the right structure, is know what it does not know.

Feed a raw transcript into a language model and ask it why you lost the deal. It will give you a confident, coherent answer. It will also fill gaps with plausible inference and present the whole thing as if every claim is equally grounded.

The fix is not to avoid AI. It is to use it skeptically and in steps. Extract what each source actually says before asking for a synthesis. Make the model flag conflicts explicitly rather than resolve them silently. Require source attribution for every claim in the output. Have it review its own conclusions against the raw sources before finalizing.

Structured inputs produce reliable outputs. Unstructured inputs produce hallucinations that read like insights.

We cover how to format deal records for AI agents specifically in Section 09.

“Gong tells me how often things come up. Hindsight tells me how the win rate changes when we talk about that topic. It's been a tremendous unlock.”

Interviewing Sales Reps

The rep knows things no system captured.

How the buyer reacted when pricing came up. Whether the champion seemed confident in the internal review or visibly uncertain. The moment the deal felt like it was slipping. What they would have done differently if they could run it again.

None of that is in Gong. None of it is in the CRM. It lives in the rep's memory, and it starts degrading the moment the deal closes.

The rep interview has one job: get that out while it is still accurate.

What you are trying to learn

A rep interview serves three purposes, in order.

First, get their account of what happened before memory fades. The rep was in the room. They felt the shifts in momentum, noticed when a stakeholder went quiet, and picked up signals that never made it into a note. That account is a primary source. Treat it like one.

Second, surface what never made it into any system. Side conversations. Hallway comments. The procurement contact who mentioned a competitor's pricing in passing. The buyer's body language in the final call. The thing the rep almost put in the CRM but did not because it felt too vague. These are often the most important data points in the deal.

Third, calibrate against what the data already shows. If the CRM says pricing and the transcript never mentions price, the rep interview is where you resolve that. Not by accepting the rep's account uncritically, but by using it alongside everything else to build a more accurate picture.

Why rep interviews go wrong

Most rep interviews produce bad data for the same reasons.

Reps rationalize losses. The story they tell is the story they told their manager, shaped by what felt defensible rather than what actually happened. Pricing. Product gaps. Bad timing. These are the answers that externalize the loss and end the conversation quickly.

Pressure makes it worse. A rep interviewed by their manager, or anyone they report to, will give a managed answer. The dynamic is wrong before the first question is asked.

Live interviews compound the problem. In a conversation, reps perform. They fill silence. They commit to a narrative early and defend it. The story hardens before you can probe it.

The format matters as much as the questions.

Run it async, in Slack, within 24 hours

The rep interview should feel like a message, not an interrogation.

Async removes the performance pressure of a live call. Slack or a similar channel feels low-stakes. The rep can answer when they are ready, think before they respond, and say something honest without a manager watching their face.

Timing is critical. Within 24 hours of close, the deal is still vivid. The buyer's reactions, the final conversation, the feeling in the room are all accessible. Wait a week and you get a reconstructed account. Wait a month and you get a category.

Do not have the rep's manager run it. Ideally, do not have a human run it at all. An AI agent in Slack sends the interview automatically at close, asks deal-specific questions, and removes the interpersonal weight entirely. The rep is not managing up. They are just answering questions about a deal.

Keep it short or lose them

A rep interview that takes ten minutes will get skipped. One that takes fifteen will get ignored after the first week. One that takes two minutes in Slack, on their phone, between calls, will actually get filled out.

The entire interview should fit in a single Slack thread. Five questions maximum. Two to three is better. Every question should be answerable in two sentences. The rep should be able to complete it during a coffee break without opening a laptop.

Do not ask anything they already entered in the CRM. If outcome, competitor, and loss reason are already logged, do not ask again. That redundancy signals that nobody read what they wrote, which signals that nobody will read this either. Reps notice. They check out.

The questions should only cover what no system can capture. Reactions. Judgment calls. What they would do differently. Things that require a human who was in the deal to answer.

Ideally, the rep's answers flow directly back into the deal record and CRM without them having to enter anything twice. The interview is a conversation, not a data entry task. If the output requires the rep to then go update Salesforce manually, you have added work instead of replacing it. The right setup captures their answers, structures them automatically, and populates the relevant fields. The rep answers once. The record updates itself.

That is the version reps will actually use.
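The two rules above, skip anything the CRM already holds and cap the thread at five questions, are easy to enforce in code. This is a minimal sketch. The `Question` class, the CRM field names, and the assumption that candidates arrive in priority order are ours.

```python
from dataclasses import dataclass
from typing import Optional

MAX_QUESTIONS = 5  # hard cap; two to three is the better target


@dataclass(frozen=True)
class Question:
    text: str
    crm_field: Optional[str] = None  # CRM field that would already answer this question


def plan_interview(
    candidates: list[Question],
    logged_fields: set[str],
    limit: int = MAX_QUESTIONS,
) -> list[Question]:
    """Drop questions the CRM already answers, then keep the first `limit` in priority order."""
    fresh = [q for q in candidates if q.crm_field is None or q.crm_field not in logged_fields]
    return fresh[:limit]


candidates = [
    Question("Was there a moment where you felt the deal shift? What happened?"),
    Question("Who else was the buyer evaluating?", crm_field="competitor"),
    Question("What could we have done earlier in the cycle to improve this outcome?"),
    Question("Why did we lose?", crm_field="loss_reason"),
]
thread = plan_interview(candidates, logged_fields={"competitor", "loss_reason"}, limit=3)
```

If a field was left blank in the CRM, its question stays in. The interview then fills the gap instead of repeating it.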

The questions that actually work

Generic questions produce generic answers. Every question should be specific to the deal and designed to surface something no system captured.

Questions that work:

"What could we have done earlier in the cycle to improve this outcome?"

This question is retrospective without being accusatory. It invites the rep to think critically about the process rather than defend the result. The answers frequently surface coaching opportunities, competitive gaps, and product issues that never appeared in the deal record.

"How did the buyer react when [specific moment] came up?"

Reaction questions get at the human signal underneath the data. How did the buyer respond when pricing was introduced? When the competitor came up? When the timeline slipped? Reaction is what the transcript cannot capture. The rep can.

"What do you think the buyer was really deciding between at the end?"

This question bypasses the official evaluation criteria and gets at the buyer's actual mental model. Reps who were close to the deal usually know. And what they say is frequently different from what ended up in the CRM.

"Was there a moment where you felt the deal shift? What happened?"

Most lost deals have a turning point. A conversation that changed the dynamic. A stakeholder who went cold. A competitor move that landed. Reps know when it happened even if they cannot always explain why. This question surfaces it.

"What would you tell the next rep going into a deal with this type of buyer?"

This question converts the individual loss into institutional knowledge. The answer belongs in your battlecards and your onboarding materials, not just the deal record.

What to do with the answers

Rep interview responses are a primary source. Tag them as such in the deal record.

Cross-reference against what the data already shows. Where the rep's account matches the transcript and CRM, confidence in that data point goes up. Where it conflicts, flag it. The conflict itself is signal. It means something was either misread in the data or managed in the interview.

Feed the gaps the rep surfaces directly into the buyer interview brief. If the rep said the champion seemed uncertain in the final week, the buyer interview should probe that specifically. The two interviews are designed to work together, not in isolation.

Scheduling Buyer Interviews

The buyer made a decision. They know exactly why. Getting them to tell you is the hard part.

Most teams blame low response rates on buyer disinterest. The real problem is almost always execution. Who reached out, when, how, and what they were asking the buyer to do. Fix those four things and response rates improve dramatically.

The biggest lever: a warm introduction

The single most effective thing you can do is get the seller to make the introduction.

Not to conduct the interview. Not to be involved in it at all. Just to send one message to the buyer saying someone from the product or leadership team will be reaching out to hear their perspective on the evaluation.

That message does two things. It signals that the outreach is legitimate, not spam. And it signals that the company takes the feedback seriously enough to have leadership involved. Buyers who would ignore a cold email will respond to something their rep flagged.

The seller's involvement ends at the introduction. What comes next has to feel entirely separate from the revenue relationship.

Who reaches out matters as much as when

Never have anyone in the sales org conduct or request a buyer interview.

Buyers know what a repitch looks like. An email from the account executive or their manager asking to reconnect about the evaluation reads as exactly that, regardless of what the subject line says. The buyer declines or ignores it. The data stays dark.

The outreach should come from product, market research, or senior leadership outside the revenue org. Someone whose involvement signals genuine curiosity about the decision rather than an attempt to reopen it.

If that person does not exist or does not have bandwidth, an AI interview agent is the next best option. A well-framed AI interview removes the interpersonal dynamic entirely. The buyer is not managing a relationship. They are answering questions. That changes what they are willing to say.

Timing

Reach out within 48 hours of close.

The evaluation is still fresh. The buyer has not fully moved on. They made a decision they thought carefully about and there is usually a window where they are willing to talk about it, especially if the ask is low effort.

Wait a week and that window starts closing. Wait a month and most buyers cannot reconstruct the nuance of why they decided what they decided. You get a category, not a story.

For won deals, the same timing applies. A buyer who just selected you is at peak engagement. That is the moment to understand what actually drove the decision, not six months into implementation when the context has faded.

Follow up once

15% of completed interviews come from a follow-up message.

Most teams send one email and move on. Send a second. Wait five to seven days after the first outreach. Keep it short. One sentence acknowledging they are busy, one sentence restating the ask, one sentence with the link.

That is it. Do not send a third. Two touches is persistence. Three is pressure, and pressure kills the goodwill that makes a buyer want to respond in the first place.

The follow-up works because timing is unpredictable. The first message arrived on a bad day. The buyer meant to respond and forgot. A second nudge at the right moment is the difference between a completed interview and a permanent gap in your deal record.
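The follow-up rule can be written as a single check. This sketch assumes the follow-up becomes due at the start of the five-to-seven-day window. The function and constant names are hypothetical.

```python
from datetime import date, timedelta

MAX_TOUCHES = 2                      # first outreach plus one follow-up, never a third
FOLLOW_UP_DELAY = timedelta(days=5)  # start of the five-to-seven-day window


def follow_up_due(first_sent: date, touches_sent: int, responded: bool, today: date) -> bool:
    """True when the single follow-up should go out."""
    if responded or touches_sent >= MAX_TOUCHES:
        return False
    return touches_sent == 1 and today >= first_sent + FOLLOW_UP_DELAY


first_sent = date(2024, 5, 1)  # illustrative date
```

Running this daily against open outreach is enough to make sure the second touch is never forgotten and the third never sent.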

Make the ask as easy as possible

Response rates improve when the path of least resistance is still useful.

Offer two options in every outreach. A longer format, a 15-minute call or a full AI conversation, for buyers willing to go deep. And a shorter fallback, a two to three minute async option, for buyers who want to help but cannot commit to more.

The fallback matters. A buyer who would have ignored a calendar invite will sometimes answer three quick questions in an async chat. That response is still valuable. Do not optimize only for the full interview at the cost of losing everyone who will not do it.

Incentives

Incentives improve response rates. Offer them.

The right incentive depends on who you are asking and what you are asking them to do.

For a 15-minute call with a senior buyer, a $50 to $100 Amazon gift card or a charity donation in their name is appropriate. For a two-minute async response, $20 to $25 is enough. Matching the incentive to the effort level signals that you understand their time is valuable.

Amazon direct redeem codes work well because they require no account creation and no claiming process. The simpler the redemption, the less friction between the interview and the reward.

For very senior buyers, a charity donation often lands better than a personal gift card. It removes any awkwardness around accepting something and positions the gesture differently.

Do not skip the incentive to save money. The data you get from even one additional buyer interview is worth multiples of a $50 gift card.

Conducting the interview

The format matters less than the context you bring into it.

Whether the buyer chose a call, an AI conversation, or an async survey, the goal is the same: understand what they decided and why, at a level of specificity that goes beyond what they put in an evaluation scorecard.

Go in with context on the deal. Know what the rep said happened. Know what the data shows. Know where the gaps are. A buyer who feels like they are being asked to repeat everything they already communicated during the evaluation will disengage. A buyer who feels like the interviewer actually knows the deal and is just trying to understand a few specific things will open up.

Start with the decision itself before probing the reasons. What did they choose? How confident are they in it? Then work backward into why. The ladder methodology, moving from surface-level reasons to deeper motivations by asking what mattered about each factor, is a useful framework here. You are not just trying to hear "integration was important." You are trying to understand why integration was the deciding factor when it might have been manageable with a different sales approach or a different product roadmap call.

Ask about the experience, not just the outcome. How did they feel about each vendor they evaluated? What surprised them? What would have changed their decision? These questions surface things that structured criteria never capture.

Won deals

Interview winners too.

Most teams skip this because it feels unnecessary. You won. You know what worked.

You do not, actually. You know what the rep thinks worked. You know what came up in calls. You do not know what the buyer weighted most heavily in the final decision, which part of your pitch landed and which they ignored, or what almost made them go a different direction.

Win interviews are also the source material for your best proof points. A buyer who tells you exactly why they chose you over a specific competitor is giving you a battlecard, a case study, and a coaching note for your reps all at once.

Won deal interviews are sometimes handled by customer success or implementation teams who are already building the relationship post-close. That works. The important thing is that the data from those conversations flows back into the same deal record, structured the same way, so it can be analyzed alongside everything else.

“I'm getting insights from deals that are being analyzed every day — pulling from Salesforce, Gong, and now win-loss interviews. My reps are going into deals with the most up-to-date information.”

Competitive Benchmarking

Coming soon.

Distributing Insights

Coming soon.

Build vs. Buy

Coming soon.

| Factor | Traditional | DIY | Hindsight |
|---|---|---|---|
| Deal coverage | 5–15% of closed deals | Depends on rep compliance | 100% of closed deals |
| Time to insight | 4–8 weeks per cycle | Varies widely | Within 12 hours |
| Buyer interviews | Analyst-scheduled, limited | Manual outreach, low response | AI-conducted, 31% avg response |
| Source triangulation | CRM only | CRM + calls (if configured) | CRM + calls + interviews |
| Competitive benchmarking | Quarterly report | Manual aggregation | Real-time, deal-level |
| Rep bias removal | Analyst judgment | None by default | Verified against buyer record |
| AI-ready output | PDF reports | Unstructured notes | Structured, queryable schema |
| Setup time | Weeks of onboarding | Months of engineering | Days |
| Cost | $50K–$250K / year | Engineering time + tooling | Starts at $899/mo |

Synthesizing for AI Agents

Coming soon.

“We'd lose a $400,000 deal and it would be like, 'client wasn't interested at this time.' Hindsight lets us run off of the quant data and say, look, this is what's happening. There's no debate.”

Start Now

Ready to see it in your own deal data?

Connect your CRM and get your first verified deal analysis within hours. No setup fees. No analyst to hire.